First I am going to talk a little about lung anatomy and then explain what can happen when you perform a lung biopsy. Imagine you push your fist into an inflated balloon. You end up with one layer of balloon in direct contact with your fist and the rest of the balloon in direct contact with the air outside. Between the two layers is the air "inside the balloon". The situation of the fist is analogous to how your lung sits inside the thoracic cavity. The balloon is called the pleura. The layer in contact with the lung is called the visceral pleura, and the other one, in contact with the wall of the thoracic cavity, is called the parietal pleura. Between these two layers is the pleural fluid, whose surface tension is what keeps the lungs inflated. So now you may guess what happens if a needle gets into the pleural space: air can enter this area (this is called a pneumothorax) and the patient may very well get a collapsed lung.

When a radiologist detects a suspicious nodule on a lung CT and thinks a biopsy is necessary, a fine needle is inserted between the ribs, passes through the pleural space, and ultimately reaches the nodule. In 25% of cases the patient will get a pneumothorax, but 98% of those patients recover on their own, meaning that the pleura heals by itself and the lung re-inflates. (Note: the collapse is not due to the needle reaching the lung parenchyma itself, but to air entering the pleural space.) However, in the remaining 2% (about 0.5% of all biopsies, going by these numbers), which includes people who are either very old or have other lung complications such as emphysema, the radiologist has to insert a tube into the pleural space to remove the air and re-inflate the lung. The tube is small, with a diameter close to that of a spaghetti noodle.

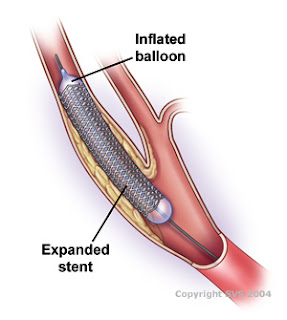

Switching gears, I want to talk about some exciting interventional radiology, which is basically collecting images by inserting catheters inside arteries and veins while the patient is alert (minimally invasive, with local anesthesia). Among the cases that I have observed, I think the most interesting one was IVC filter placement. IVC stands for inferior vena cava, the major vein that carries de-oxygenated blood from the legs and lower body to the right atrium of the heart. The filter is a little guy that looks like an umbrella, without the cloth on top of course, and it is placed inside the vein to stop blood clots from traveling through the right side of the heart and going on to block the pulmonary arteries, which carry de-oxygenated blood to the lungs for oxygen exchange. The filter is shown below.

The way the procedure works is that the interventional radiologist attaches the hook of the filter to the catheter and then inserts the catheter into the femoral vein. There is a sheath over the filter that, once pulled back, lets it open up like an umbrella. Once they inject contrast and confirm that the filter is in the right place, they withdraw the catheter and sheath and check that the filter has stayed put. It is interesting to know that you only feel pain on the surface of your skin and the surface of the abdominal cavity. That's why if they place a catheter inside your veins and arteries you probably won't feel anything!

aafp.org
